Recent discussions position context graphs as a foundational abstraction for agentic AI and a trillion-dollar opportunity. These discussions are a great starting point, but they are incomplete. Richer context matters for agents. However, the core challenge is not recovering “what happened,” or even explaining “why an action was taken.”

How do we build a foundation for stateful reasoning about intent and outcomes in environments — including consumer-centric settings — where behavior is strategic, adaptive, and shaped by latent objectives? To unlock the true potential of the trillion dollar opportunity in AI — and to create a credible path toward AlphaGo-like learning dynamics (i.e., where the AI system came up with a counterintuitive but highly effective move that stumped experts) — we need to address three key aspects:

- Market coverage

- Technology capability

- Longer-term evolution toward superhuman optimization

The Three Gaps: Market, Technology, and Superhuman Optimization

1. Market Coverage: Does the same approach work for all types of agents?

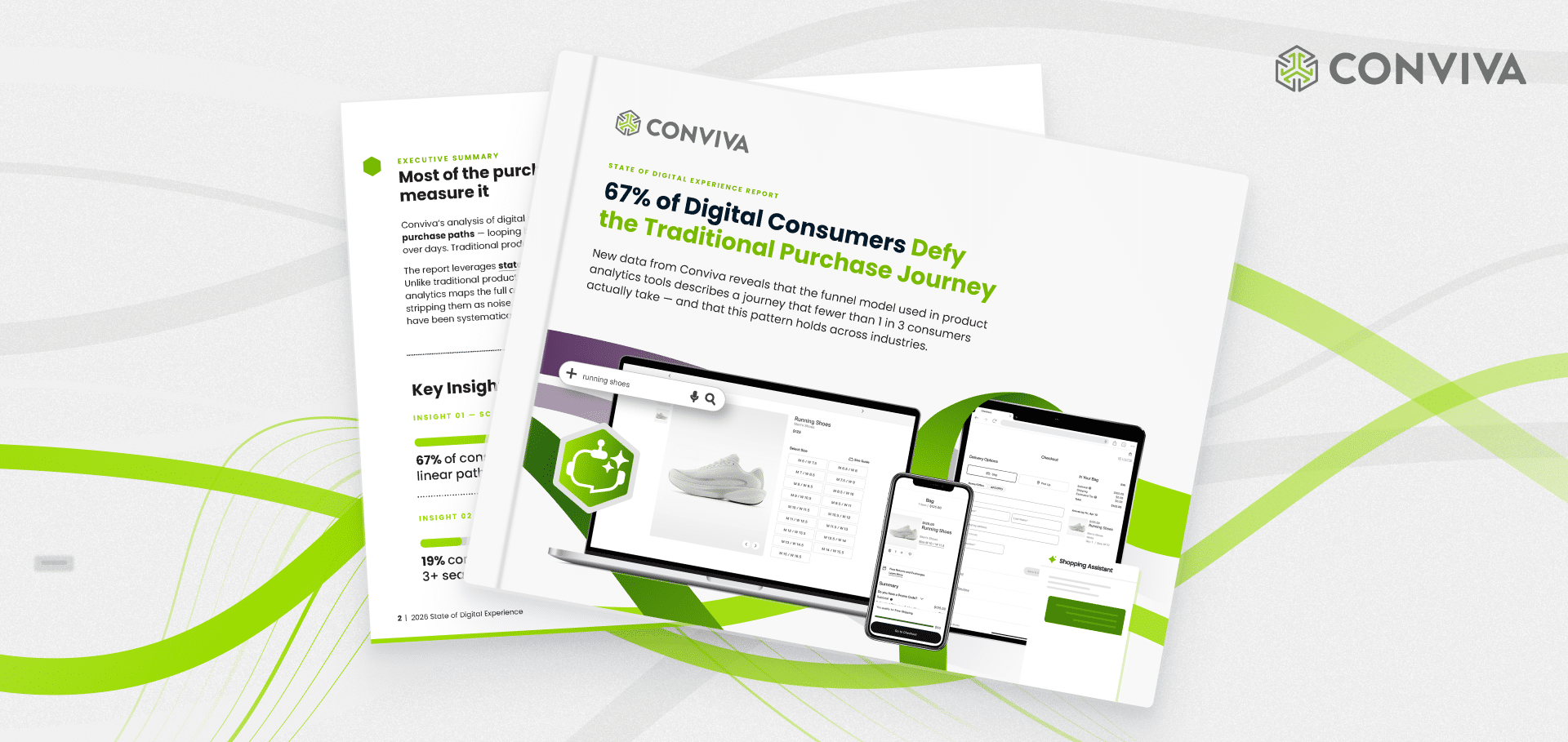

Much of today’s discussion implicitly centers on enterprise-centric agents. At the same time, a major segment of the market involves consumer-centric agents, including consumer-facing agents on digital storefronts; emerging agent actors that work on behalf of consumers; and the interaction among human consumers and these two types of agents.

2. Technology Capabilities: Are graphs sufficient to extract meaningful outcomes?

Graphs are an incomplete abstraction to capture the essence of the decision logic. What we really need are expressive stateful reasoning systems that can extract business logic from the observations, can model the stateful contextual behaviors of users/user agents, and tie these to customer-impacting business outcomes.

3. Evolution: What is the pathway to an AlphaGo moment?

In the medium to long term, the opportunity should extend beyond reconstructing “why” humans did what they did to get to the root of understanding consumer/business intent, and learning to optimize outcomes through exploration, feedback, and adaptation via AI.

Market Gap: Expanding the Lens to Consumer-Centric Agents

In industry, the term “agent” covers a wide range of systems. Likewise, the discussion around context graphs has been vague regarding what specific types of agents we are discussing and what the specific needs of different kinds of agents are.

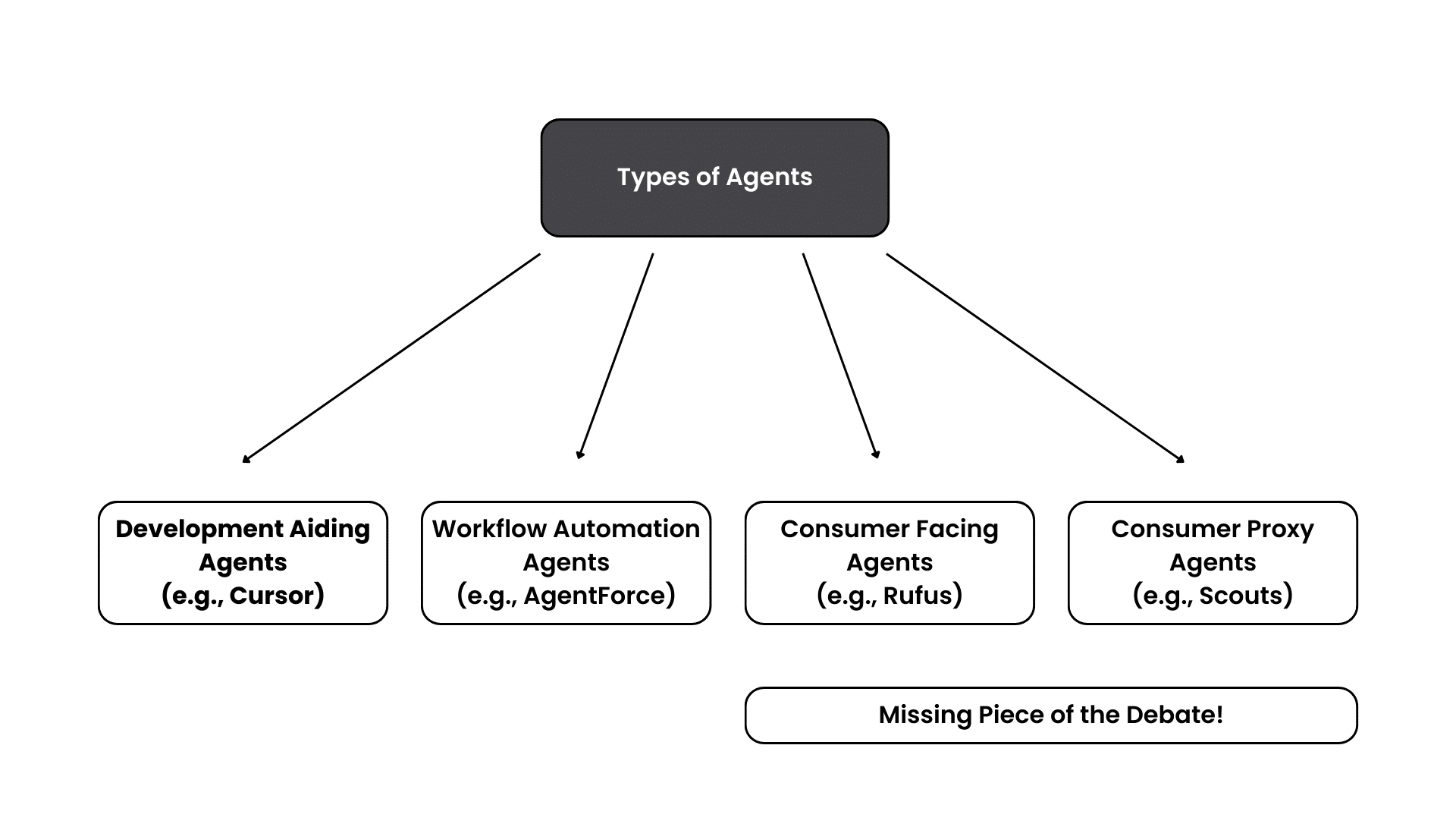

To a first-order approximation, we can divide the world of agentic deployments into four broad classes as shown in the figure below

- Developer Assistants — these are the Cursor and Claude Code or OpenHands-type agents that help with developer productivity.

- Workflow Automation Agents — these are agents that look at canonical enterprise business processes and try to create automated agentic versions of existing enterprise workflows.

- Consumer-Facing Agents — these include customer service agents and shopping assistant agents which are deployed in a wide range of digital storefronts as assistants to help customers.

- Consumer Proxy Agents — these include browser agents and other agents like Scouts that act as “proxies” on behalf of consumers.

At a high level, we can view the first two classes as more “enterprise-centric” agents and the last two classes as “consumer-centric” agents. Many current context graph discussions focus on the first two categories of enterprise-centric agents. Consumer-facing agents in support, shopping, and travel operate in a fundamentally different environment from enterprise/internal agents. The user population is more open-world: consumers vary widely in goals, context, and interaction styles. Tolerance for friction is often lower, because each interaction directly affects brand trust and revenue.

Expanding the lens to consumer-centric deployments — especially to human consumers and consumer proxy agents interacting with merchant agents embedded in digital web and apps — helps surface additional requirements around state, strategy, incentives, and outcomes. These capabilities will in turn help the future and more powerful enterprise agents, help to fully unlock the “Trillion Dollar Opportunity” and the “Trillion Dollar Technical/Business Challenges” involved.

Technology Gap: “Context Graphs” Need Stateful Outcome-Centric Reasoning

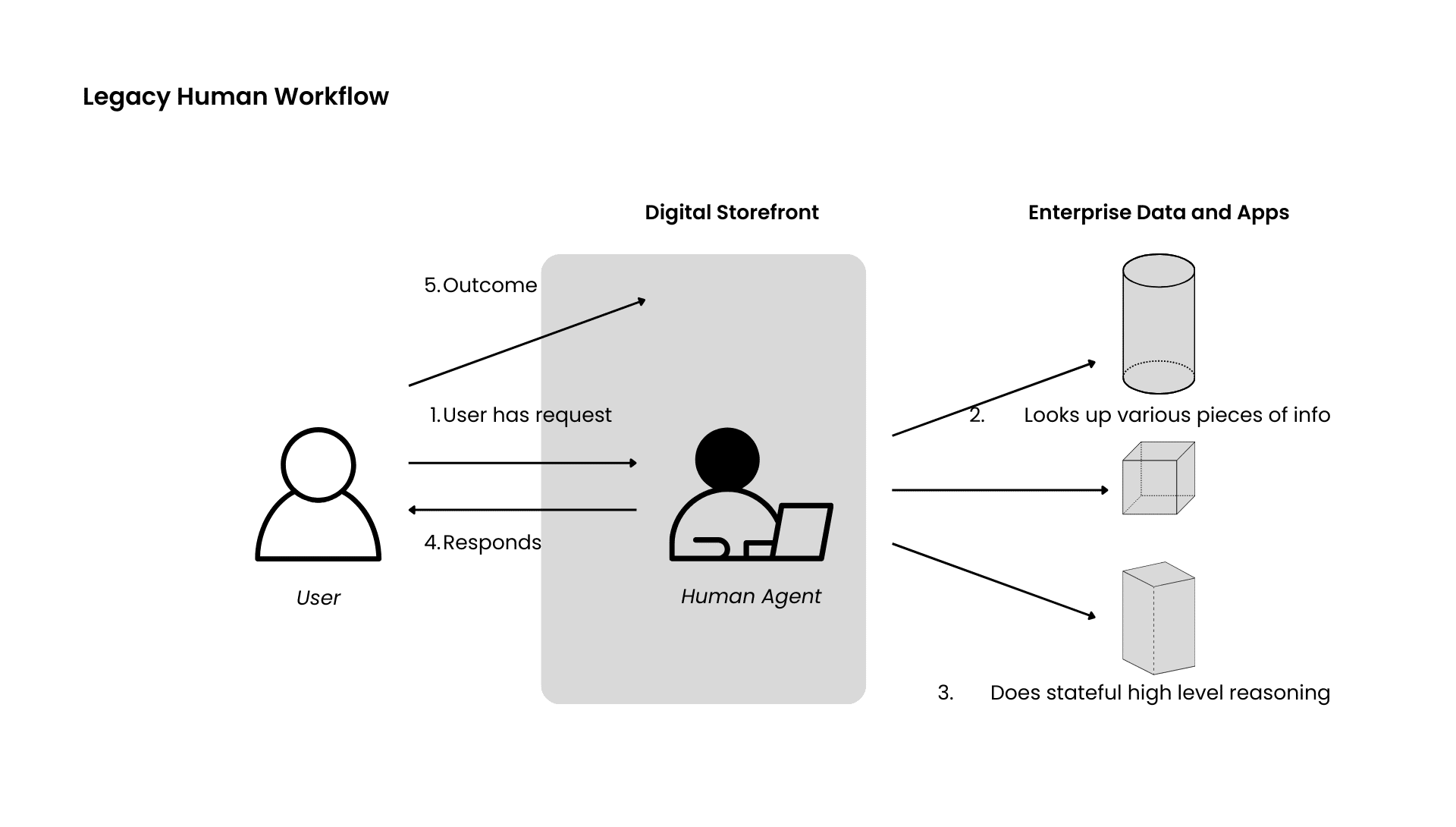

Let us consider a typical user-consumer agent interaction in a traditional setting today. The user has some query (e.g., bookings or discounts or refunds or status of an order) and the human agent (e.g., via chat interface) will respond to that user query by looking up its internal documentation and other enterprise data to answer the question. The human agent workflow in this case will involve some high-level logical stateful reasoning about both the behavior and the intent and behavior of the customer, such as, what were they searching for, what type of product, what is the history of the customer and the contextual information from the enterprise data (e.g., status of orders, available discounts).

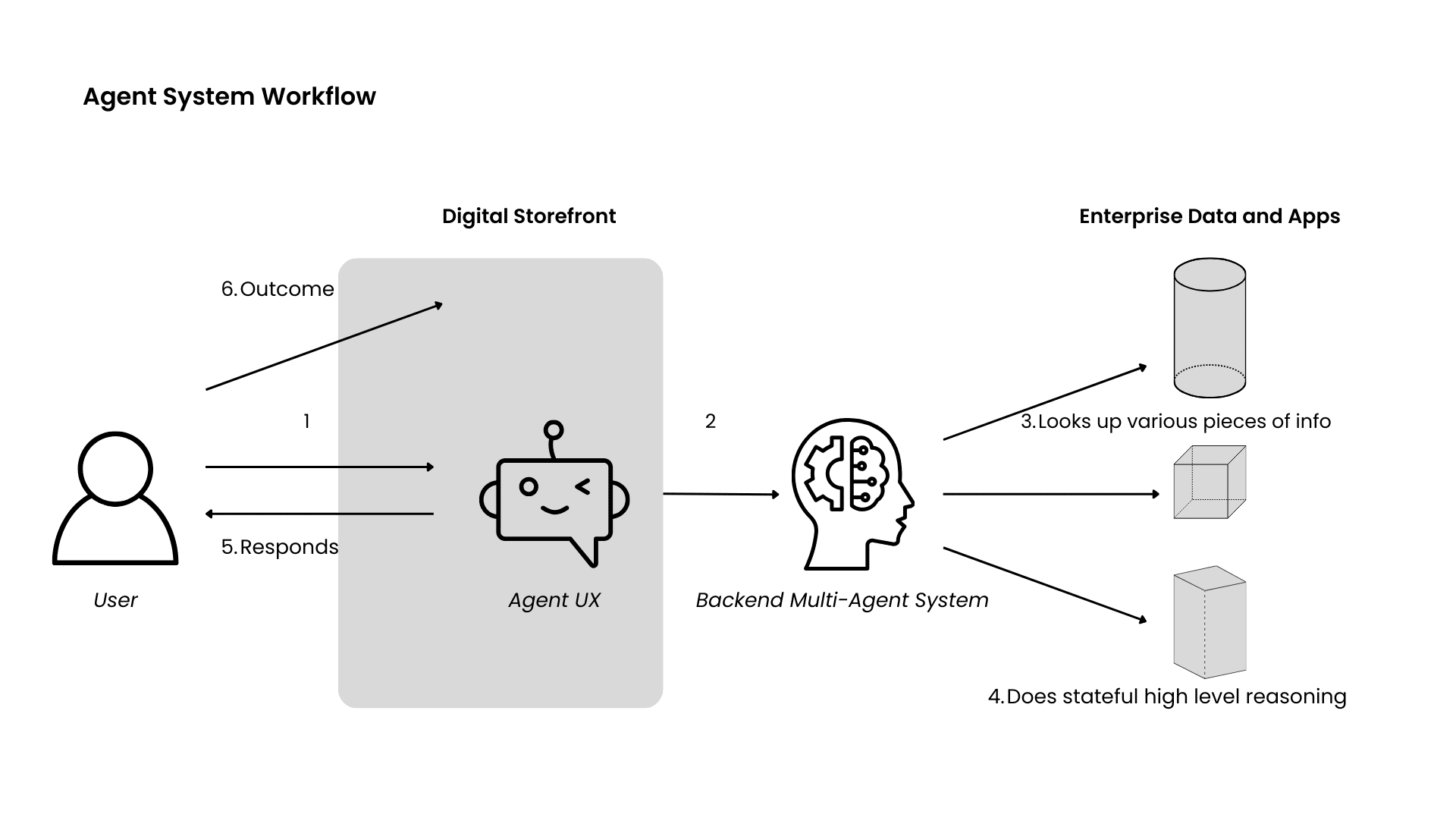

Now, consider that we want to evolve from a human agent workflow to an AI-agent workflow to both improve the storefront’s outcomes (e.g., cost, revenue) and the user’s outcomes (e.g., product satisfaction, consumer experience, reduce frustration).

The current debate has done a fabulous job of articulating that this “migration” from the human workflow to the agentic workflow needs to not just understand “what” the human agent did, but needs more contextual understanding on “why” the human agent did what it did. This framing of the need for “context graphs” has sparked a significant debate on the need for better observability and the need for better reasoning systems among others.

While we completely agree on the need for the “why,” in the consumer-centric agent context, we also believe that the debate is missing a key element on the technology capabilities needed to capture this “why”!

We suggest that the key to unlocking the “why” will need new kinds of stateful reasoning capabilities that can capture: (a) the stateful, contextual behavior of the human consumer (e.g., what was the sequence of actions the user was doing in the app, what was their browsing pattern just before talking to the agent, what was the trajectory of purchase behavior of the customer in the past); (b) the stateful business context (e.g., how much was the shipment delayed after the order was made, what is the business impact of users after receiving a discount, what is the state of the inventory over time).

By stateful, we mean that we not only need to look at the instantaneous data (i.e., like classical data processing) in the system, but really need to look at the sequence, history, trajectory, and timing patterns to accurately make sense of the data to infer intents, inform decisions, and drive outcomes!

This is not merely collecting the telemetry (e.g., backend agent traces or workflow logs) and modeling it as a “graph” of some kind, it requires deeper understanding analysis to extract semantic behavioral intents and outcomes and their business contexts and extracting insightful stateful patterns.

Evolution To SuperHuman: From Mimicry to Understanding for Optimizing Outcomes

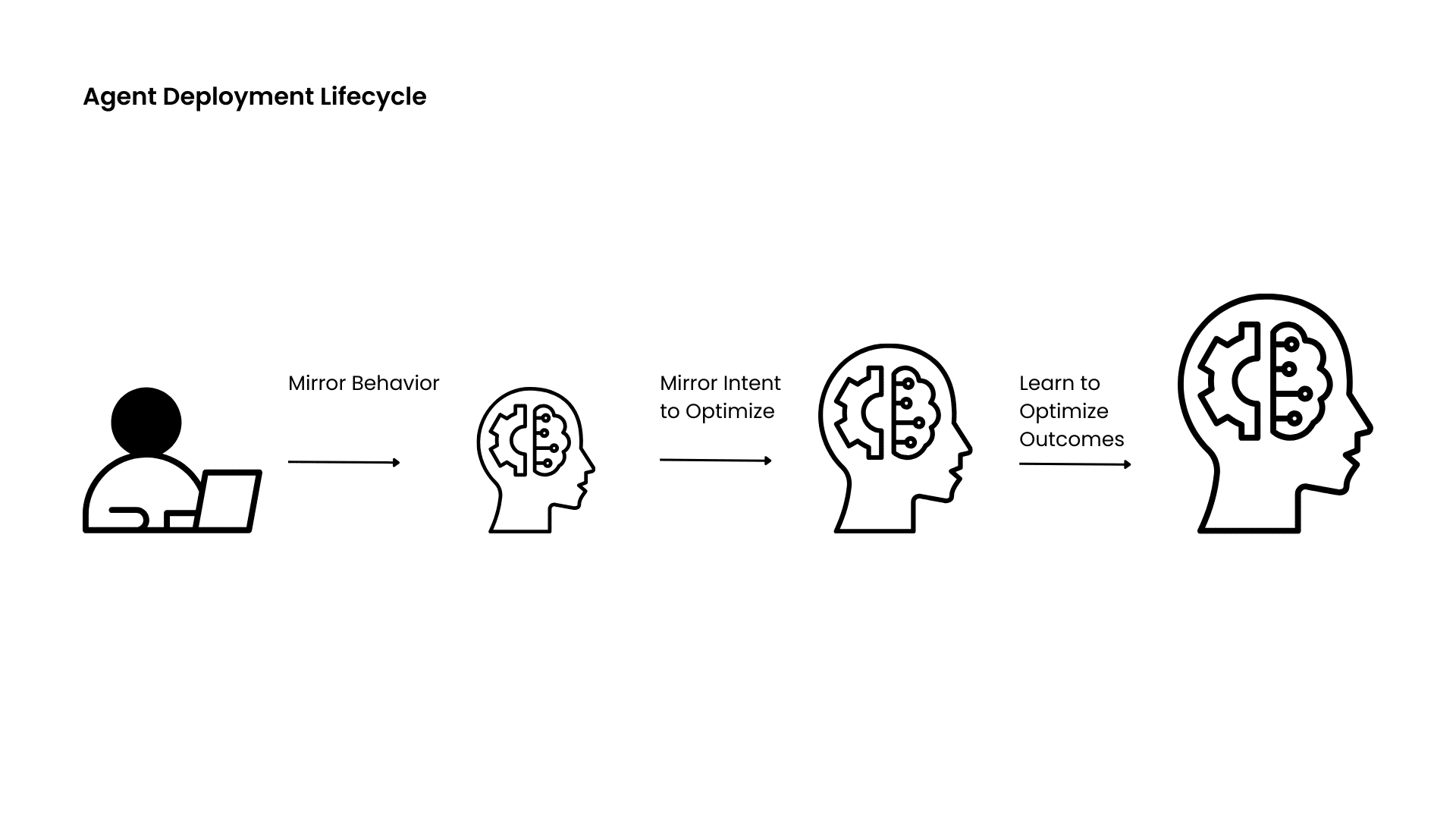

Think of the “AlphaGo” moment when the AI player came up with a counterintuitive but effective move that stumped the human experts — analogously, an agentic system may come up with a superior strategy using creative exploration that a human search (bounded by human compute and memory) may not reveal!

In this respect, we believe that the current discussion in the “context graph” debate is potentially missing a bigger medium-to-long-term opportunity. Our goal should not be merely to create decision traces to get to the why and “mimic” what a human agent did in their workflow. Rather, we should look beyond the “current workflow” that may be restricted by human computation, memory, and imagination to the future where the agentic workflow can learn behavioral and business intents and learn to optimize them on its own.

We can envision an evolutionary lifecycle for both the “consumer-facing agent” and the “consumer-proxy agent” in our taxonomy above:

- Phase 1: Starting with a human, we initially try to mimic the reasoning behind the human workflow and mimic the behavior. This is mostly where the current debate ends.

- Phase 2: We move beyond behavioral mimicry to understand the user intent and optimize the agent. Rather than just reasoning about why the human did something, we can have an agent understand the behavioral intent and business outcome intents and try to find new avenues or other data sources to optimize them together.

- Phase 3: We move into a self-learning and self-adaptive phase where the agent knowing the intent and optimization can continuously evolve to learn to optimize outcomes on behalf of the consumers and the digital storefronts they interact with.

As this “evolutionary” path rolls out, we argue that the “stateful reasoning engine” that we discussed above will become even more critical. It’s the key to unlocking an understanding of the behavioral outcomes, business outcomes, and their interactions!

Summary:

The heated debate around Context Graphs has hit the nail on the head that we need to move beyond just collecting data on “what” happened to create a foundational basis to reason about “why” something happened.

However, the debate remains incomplete without addressing key gaps in markets, technology, and evolution. These gaps include: (1) consumer-centric agents — those deployed by enterprises to assist consumers and those deployed by consumers to act as proxies on their behalf. We emphasize consumer-centric agents for two reasons: They represent significant business opportunities that have received insufficient attention, and they present critical technical challenges that will help shape the design of future, more sophisticated enterprise agents. (2) The creation of new analytical engines capable of interpreting collected data in a stateful, contextual manner and linking it to business outcomes. (3) A broader time horizon that looks beyond the immediate future to a “superhuman” future, in which both sides of consumer-centric agents optimize for outcomes rather than merely replicate existing workflows.